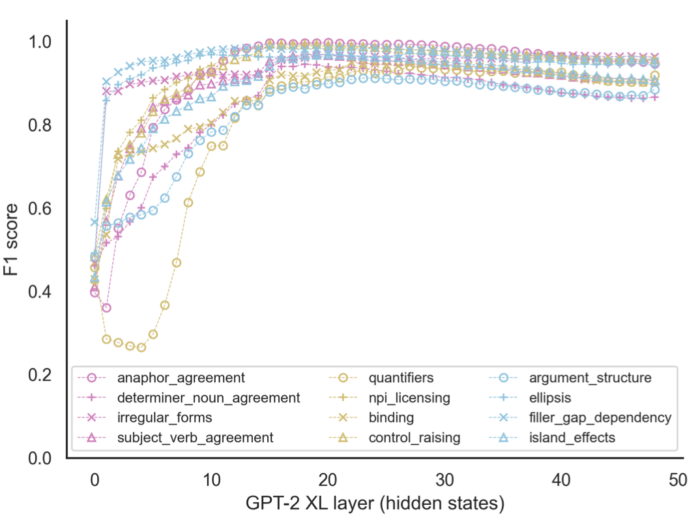

MA student Linyang He leads a team that advances probing methods for large language models by combining a linear decoder with BLiMP, a large-scale benchmark of linguistic minimal pairs. The result is a probing method that isolates patterns of layer-wise activation that are sensitive to distinct linguistic phenomena. There is a lot to unpack in the results (please read the paper!), but there are two headlines. First, network sensitivity to grammatical features emerges early and gradiently: decoding accuracy increases linearly across the first third of layers in GPT2-XL. Second, the slope of this gradient varies across constructions (e.g. the slope for classifying NPI licensing is steeper than the slope for classifying argument structure violations); one predictor of this slope is the syntactic complexity of each sentence, measured as normalized node count. Read on for more.

He, L., Chen, P., Nie, E., Li, Y., & Brennan, J. R. (2024, March 25). Decoding Probing: Revealing Internal Linguistic Structures in Neural Language Models using Minimal Pairs. Proceedings of LREC-COLING 2024. http://arxiv.org/abs/2403.17299

Abstract

Inspired by cognitive neuroscience studies, we introduce a novel ‘decoding probing’ method that uses minimal pairs benchmark (BLiMP) to probe internal linguistic characteristics in neural language models layer by layer. By treating the language model as the ‘brain’ and its representations as ‘neural activations’, we decode grammaticality labels of minimal pairs from the intermediate layers’ representations. This approach reveals: 1) Self-supervised language models capture abstract linguistic structures in intermediate layers that GloVe and RNN language models cannot learn. 2) Information about syntactic grammaticality is robustly captured through the first third layers of GPT-2 and also distributed in later layers. As sentence complexity increases, more layers are required for learning grammatical capabilities. 3) Morphological and semantics/syntax interface-related features are harder to capture than syntax. 4) For Transformer-based models, both embeddings and attentions capture grammatical features but show distinct patterns. Different attention heads exhibit similar tendencies toward various linguistic phenomena, but with varied contributions.
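To make the layer-by-layer decoding idea concrete, here is a minimal sketch in Python. It is not the paper's code. The activations are synthetic stand-ins (random vectors with a grammaticality signal that is assumed to grow with depth), and a ridge-regularized least-squares classifier stands in for the linear decoder. All function names (`make_synthetic_layers`, `fit_linear_probe`, `layerwise_accuracy`, `accuracy_slope`) are hypothetical and introduced only for illustration. With a real model, the per-layer matrices would instead hold sentence representations extracted from each layer for the grammatical and ungrammatical member of every BLiMP pair.

```python
import numpy as np


def make_synthetic_layers(n_pairs=200, dim=32, n_layers=6, seed=0):
    """Build fake per-layer 'activations' for 2 * n_pairs sentences.

    Assumption for illustration only: the grammaticality signal is absent
    at layer 0 and grows linearly with depth.
    """
    rng = np.random.default_rng(seed)
    labels = np.repeat([1, 0], n_pairs)  # 1 = grammatical, 0 = ungrammatical
    direction = rng.normal(size=dim)
    direction /= np.linalg.norm(direction)
    layers = []
    for layer in range(n_layers):
        strength = 0.5 * layer
        noise = rng.normal(size=(2 * n_pairs, dim))
        signal = np.outer(2 * labels - 1, direction) * strength
        layers.append(noise + signal)
    return layers, labels


def add_bias(X):
    """Append a constant column so the probe can learn an intercept."""
    return np.hstack([X, np.ones((len(X), 1))])


def fit_linear_probe(X, y, l2=1.0):
    """Closed-form ridge regression onto +/-1 targets (a linear decoder)."""
    Xb = add_bias(X)
    targets = 2.0 * y - 1.0
    A = Xb.T @ Xb + l2 * np.eye(Xb.shape[1])
    return np.linalg.solve(A, Xb.T @ targets)


def probe_accuracy(w, X, y):
    """Fraction of held-out sentences whose label the probe recovers."""
    preds = (add_bias(X) @ w > 0).astype(int)
    return float((preds == y).mean())


def layerwise_accuracy(layers, labels, train_frac=0.8, seed=0):
    """Train and evaluate one probe per layer on a shared random split."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(labels))
    cut = int(train_frac * len(labels))
    train, test = order[:cut], order[cut:]
    accs = []
    for X in layers:
        w = fit_linear_probe(X[train], labels[train])
        accs.append(probe_accuracy(w, X[test], labels[test]))
    return accs


def accuracy_slope(accs):
    """Slope of a straight-line fit to decoding accuracy versus layer index."""
    return float(np.polyfit(np.arange(len(accs)), accs, 1)[0])


layers, labels = make_synthetic_layers()
accs = layerwise_accuracy(layers, labels)
print("accuracy per layer:", [round(a, 2) for a in accs])
print("slope:", round(accuracy_slope(accs), 3))
```

In this toy setup the slope summarizes how quickly grammaticality becomes linearly decodable across layers. Comparing such slopes across phenomena is the kind of analysis described above, although the paper's actual decoder, features, and evaluation protocol may differ. A careful analysis would also keep both members of a minimal pair in the same split, which this sketch does not do.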